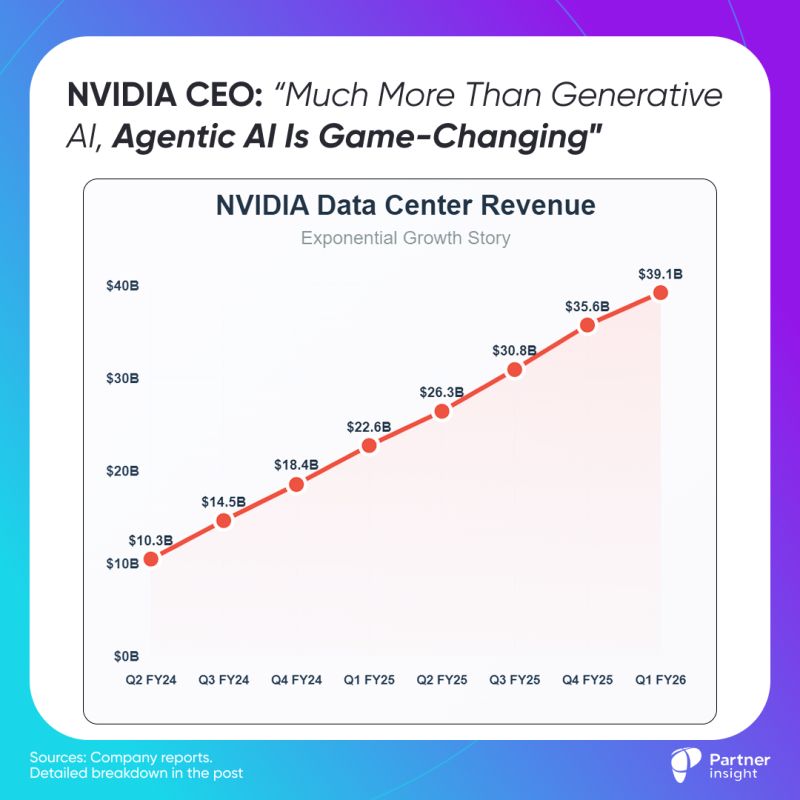

While headlines focused on another revenue beat, the real story for alliance leaders lies in what Jensen Huang called "positive surprises" accelerating AI adoption since GTC.

Enterprise AI agents have crossed the effectiveness threshold

"Agents work... Much more than generative AI, agentic AI is game changing," Huang emphasized. "Agents can understand ambiguous instructions and problem solve using tools and memory."

This validation from the company powering most AI infrastructure signals enterprise deployment is moving to production at scale.

Inference demand has exploded beyond projections

Microsoft alone processed 100 trillion tokens in Q1 – a 5X increase year-over-year.

"We are witnessing a sharp jump in inference demand. OpenAI, Microsoft, and Google are seeing a step function leap in token generation," NVIDIA's CFO noted on last week’s call.

Reasoning AI "requires hundreds to thousands of times more tokens per task than previous one-shot inference," which fundamentally changes AI deployment economics. Where chatbots used minimal compute, reasoning agents think step by step and solve complex problems, driving what NVIDIA calls "a step function surge in inference demand."

NVIDIA's partnerships offer a masterclass in strategic alliance building

While partnering deeply with hyperscalers on AI infrastructure, NVIDIA simultaneously competes with them by becoming an infrastructure company itself. "AI is also an infrastructure," the CEO noted, comparing it to electricity or the internet.

The company's ability to balance these relationships shows that partners can create both mutual value and customer value even amid competition. In the end, the customer decides where to buy, and developers pick where to build and deploy.

This AI acceleration is set to boost cloud & marketplace adoption

I think the inference explosion will create a massive pull toward hyperscalers, where enterprises already store data and run core systems. Since reasoning AI agents need seamless access to proprietary datasets and enterprise frameworks, companies will naturally build where their infrastructure already is.

When enterprises need to build and deploy reasoning agents at scale, they'll turn to cloud marketplace solutions that scale instantly. The technical complexity of building a reasoning, multi-agent AI future makes hyperscaler platforms the logical foundation.

NVIDIA hedges both directions (cloud and on-prem), recognizing data sovereignty realities

Huang emphasized that "so much data is still on-prem. It's really hard to move all of every company's data into the cloud." NVIDIA's new RTX Pro enterprise servers target the $500B on-premises IT infrastructure market, where sensitive data can't easily be migrated to public clouds.
